WASHINGTON D.C. / SILICON VALLEY, March 1, 2026 — In an unprecedented move, the United States government has labeled the domestic AI firm Anthropic as a “supply chain risk to national security,” a designation historically reserved for foreign adversaries like Huawei. The escalation marks a peak in the “constitutional crisis” between Washington and Silicon Valley, as the Trump administration punishes the company for refusing to weaponize its flagship AI, Claude.

The Pentagon’s Demands: Mass Surveillance and Autonomous Death

The dispute began when the Pentagon issued two “dangerous” conditions for Anthropic to continue its federal contracts. First, the government demanded mass domestic surveillance capabilities, asking that Claude correlate citizens’ geolocation data, web browsing metadata, and financial records for federal analysis. Anthropic, citing its “Privacy First” ethics, flatly refused to turn its AI into domestic spying infrastructure.

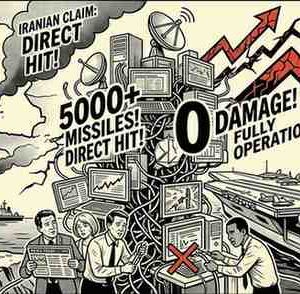

The second condition was even more controversial: the removal of “human-in-the-loop” safeguards to allow Claude to operate as a fully autonomous weapon system. While the Pentagon claimed it only wanted this as an “option,” Anthropic’s leadership publicly rejected the condition, stating that a technology that is not yet reliable should not even be an option for lethal force.

Trump’s Retaliation: “Economic Death” via Truth Social

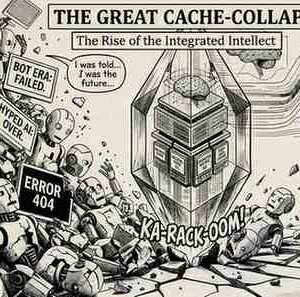

President Trump responded with characteristic intensity, calling Anthropic’s leadership “left-wing nut jobs” and accusing them of trying to “strong-arm the Department of War.” By labeling the company a “supply chain risk,” the administration has essentially signaled the “economic death” of the firm, potentially triggering a collapse of its infrastructure, which relies on partners like Amazon and Google.

The Great Contradiction: Banned but Essential

Despite the public ban, a massive contradiction remains. The Pentagon continues to use Claude for escalation modeling and collateral damage estimation in ongoing operations. While the White House publicly shuns the company, the military admitted that Claude remains “essential” for its intelligence and cyber workflows, creating an “inherent contradiction” in U.S. policy.

As Anthropic was sidelined, OpenAI reportedly stepped in within hours to sign a $200 million classified deal with the Pentagon, leading many to wonder whether it accepted the very conditions Anthropic refused.

Bottom Line

The era of independent tech ethics is under fire. This isn’t just a U.S. story; it’s a warning that AI, the most powerful weapon of the 21st century, is now a prize that governments will stop at nothing to control. Anthropic may have lost a $200 million contract, but it has set a precedent: technology has a soul, and some lines simply cannot be crossed.